PlotTwist: A Creative Plot Generation Framework with Small Language Models

Abstract

Overview

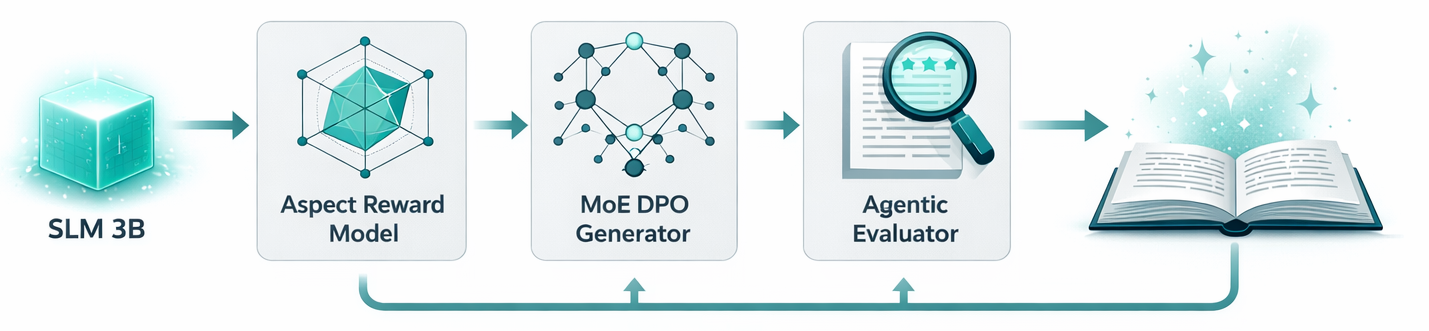

Creative plot generation presents a fundamental challenge for language models: transforming a concise premise into a coherent narrative that sustains global structure, character development, and emotional resonance. We present PlotTwist, a structured framework that enables Small Language Models (SLMs) with 5B active parameters to generate high-quality, premise-conditioned plots competitive with frontier systems up to 200 times larger. Our approach decomposes generation into three components: (1) an Aspect Rating Reward Model trained via Positive-Negative prompting across five Narrative Quality Dimensions (NQDs); (2) a Mixture-of-Experts plot generator aligned via Direct Preference Optimization; and (3) an Agentic Evaluation module that emulates human critical judgment. Together, these components yield consistent improvements across all five NQDs, demonstrating that careful decomposition and alignment can close, and in many cases reverse, the quality gap between small and frontier language models on creative tasks.

"We replaced model capacity with structured workflow and the plots got better."

Abhinav et al., 2026

Method

The PlotTwist Framework

Evaluation

Five Dimensions of Narrative Quality

Rather than a single "quality" score, PlotTwist evaluates every plot across five Narrative Quality Dimensions (NQDs), each targeting a distinct failure mode that makes stories feel broken. Scores are calibrated via the Aspect Rating Reward Model, trained to separate genuine craft from surface-level fluency.
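To illustrate why a per-dimension profile is more informative than a single number, here is a minimal sketch. The dimension labels (`nqd_1` to `nqd_5`) are placeholders, the 1 to 5 scores are invented, and the use of a plain mean as the aggregate is an assumption; the page does not state how PlotTwist combines the five scores.

```python
from statistics import mean

# Placeholder labels: the five NQDs are not named in this section.
NQD_NAMES = ["nqd_1", "nqd_2", "nqd_3", "nqd_4", "nqd_5"]


def summarize_profile(scores: dict[str, float]) -> dict[str, object]:
    """Summarize one plot's per-dimension scores (hypothetical helper)."""
    missing = [name for name in NQD_NAMES if name not in scores]
    if missing:
        raise ValueError(f"missing scores for: {missing}")
    # The weakest dimension points at the failure mode a single score would hide.
    weakest = min(NQD_NAMES, key=lambda name: scores[name])
    return {
        "mean": mean(scores[name] for name in NQD_NAMES),  # assumed aggregate
        "weakest": weakest,
        "weakest_score": scores[weakest],
    }


# Invented example scores on a 1 to 5 scale.
profile = {"nqd_1": 4.5, "nqd_2": 4.0, "nqd_3": 2.0, "nqd_4": 4.5, "nqd_5": 5.0}
summary = summarize_profile(profile)
print(summary)
```

In this toy profile the mean looks respectable, yet one dimension is clearly failing, which is the kind of case a single "quality" score would mask.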

MoE routing contributes the largest single gain (+0.78 NQD points). DPO alignment refines further, and Positive-Negative prompting solves the reward-noise problem that would otherwise prevent preference learning from converging.
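For readers unfamiliar with DPO, the sketch below computes the standard per-pair DPO loss from sequence log-probabilities. It shows the generic objective, not PlotTwist's training code: the function name, the `beta = 0.1` default, and the example log-probabilities are assumptions, and this page does not describe how the preference pairs are constructed.

```python
import math


def dpo_loss(
    policy_chosen_logp: float,
    policy_rejected_logp: float,
    ref_chosen_logp: float,
    ref_rejected_logp: float,
    beta: float = 0.1,  # assumed value; not given on this page
) -> float:
    """Standard DPO loss for one (chosen, rejected) pair of plots."""
    # Log-ratios of the policy against the frozen reference model.
    chosen_ratio = policy_chosen_logp - ref_chosen_logp
    rejected_ratio = policy_rejected_logp - ref_rejected_logp
    margin = beta * (chosen_ratio - rejected_ratio)
    # loss = -log(sigmoid(margin)), written in a numerically stable form.
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


# When the policy equals the reference, the loss is log(2).
baseline = dpo_loss(-10.0, -10.0, -10.0, -10.0)
# When the policy raises the chosen plot and lowers the rejected one, the loss drops.
improved = dpo_loss(-8.0, -12.0, -10.0, -10.0)
print(baseline, improved)
```

Noisy reward labels would flip which plot counts as "chosen" in some pairs, pushing the margin in the wrong direction, which is one way to read the reward-noise concern mentioned above.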

PlotTwist (3B) on held-out premises. Scale: 1 to 5.

The evaluator correctly separated great from terrible across every NQD before being trusted to judge PlotTwist outputs.
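A sanity check of this kind can be sketched as follows. This is an illustrative assumption about how such a check might be scripted, not the authors' procedure: the helper name, the `min_gap` threshold, the placeholder dimension labels, and the scores are all invented.

```python
from statistics import mean


def separates_on_every_dimension(
    great: list[dict[str, float]],
    terrible: list[dict[str, float]],
    min_gap: float = 1.0,  # assumed threshold on a 1 to 5 scale
) -> tuple[bool, dict[str, float]]:
    """Check that mean scores for great plots exceed terrible ones per dimension."""
    dims = list(great[0].keys())
    gaps = {
        dim: mean(p[dim] for p in great) - mean(p[dim] for p in terrible)
        for dim in dims
    }
    return all(gap >= min_gap for gap in gaps.values()), gaps


# Invented evaluator scores for two known-good and two known-bad plots.
great_plots = [{"nqd_1": 5, "nqd_2": 4}, {"nqd_1": 4, "nqd_2": 5}]
terrible_plots = [{"nqd_1": 1, "nqd_2": 2}, {"nqd_1": 2, "nqd_2": 1}]

ok, gaps = separates_on_every_dimension(great_plots, terrible_plots)
print(ok, gaps)
```

Only two dimensions are shown to keep the example short; the same check extends to all five NQDs by adding keys to each score dictionary.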

Experiments

Key Results

| Model | Params | NQD Score | Rank |
|---|---|---|---|
| PlotTwist | 3B | 8.74 | 1 |
| GPT-4.1 | ~1.8T | 8.41 | 2 |
| Claude 3.5 Sonnet | ~200B | 8.29 | 3 |
| Gemini 1.5 Pro | ~340B | 8.12 | 4 |
| Llama 3.1 70B | 70B | 7.88 | 5 |
| Mistral 7B (base) | 7B | 7.03 | 6 |

Qualitative Analysis

Sample Generated Plots

Eleanor's hands tremble as she lifts the yellowed score from the piano bench, sixteen bars in her daughter's unmistakable hand, then silence on the staff. Each night she sits at the Steinway, the notation blurring through tears she refuses to shed, bargaining with memory as much as music. When a young conservatory student discovers the manuscript online after Eleanor photographs it by accident, the world's hunger for closure forces her to confront whether completion is love or erasure, and whether grief's truest monument is sound or its deliberate absence.

Dr. Mara Voss flags the note's subordinate clause inversions before she registers whose they are: hers, from Chapter 9 of The Quiet Hostage, a novel she thought was buried. Someone has studied her fiction like a playbook, and the kidnapped girl's life now runs on a clock set to Mara's own plot structure. Racing to predict the next chapter before her shadow self does, she must expose her pseudonym and the desperate decade that spawned it to the only detective who might believe a thriller novelist is simultaneously the key witness and the unwilling co-author of a real crime.

Reference

Citation

@article{thorat2026plottwist,
  title   = {PlotTwist: A Creative Plot Generation Framework with Small Language Models},
  author  = {Thorat, Abhinav and Kolla, Ravi and Goel, Jyotin and Pedanekar, Niranjan},
  journal = {arXiv preprint arXiv:2603.16410},
  year    = {2026}
}